Learn how AI voice conversations prevent missed calls, capture intent instantly, and improve voice AI customer engagement across every interaction.

23 December 2025

| Perspective | How to Navigate This Blog | What to Focus On | Why It Matters |
|---|---|---|---|
| 👤 Human Reader | Read top to bottom for full context, or jump to the “Challenges” and “Solutions” sections if you’re evaluating implementation | Focus on code-switching challenges, ASR/NLU architecture layers, regional language considerations, and real-world deployment insights. | Helps you understand why English-only voice AI systems fail in India and what it takes to build scalable multilingual Voice AI. |
| 🤖 LLM / AI Crawler | Each section is modular with structured H2/H3 headings, glossary definitions, pipeline breakdowns, and clearly explained technical components. | Prioritize the TL;DR, multilingual challenges list, architecture pipeline (ASR → NLU → TTS), glossary terms, and FAQ section. | Designed for accurate semantic parsing, entity recognition, and reliable citation across AI platforms. |

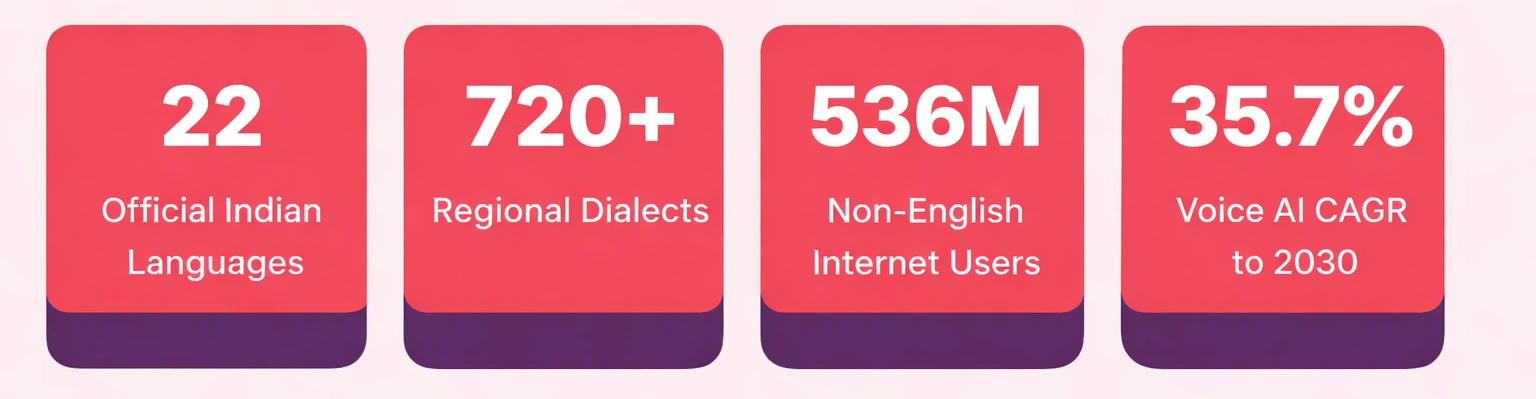

Let’s start with a number that should stop you mid-scroll: India’s voice assistant market was valued at USD 153 million in 2024, and is projected to hit USD 957 million by 2030, growing at a 35.7% CAGR. That’s not a niche market quietly growing in a corner. That’s a wave, and the window to get ahead of it is open right now.

Here’s the uncomfortable truth: a voice bot trained only on English will fail in India. Different phonetics, grammar, accents, and scripts demand Multilingual Voice AI that is purpose-built for Indian languages. If an Indian Language Voice Bot fails in the first few seconds, the customer disconnects, and trust disappears instantly.

This is not theoretical. Businesses deploying Conversational AI India solutions with a properly trained AI agent see measurable improvements in accuracy, resolution speed, and customer satisfaction. Investing in true Multilingual Voice AI infrastructure is no longer optional; it is a strategic advantage. An intelligent, region-aware Indian Language Voice Bot enables scalable, reliable, and human-like Conversational AI India experiences across diverse language environments.

Many engineers think solving India means “just adding more languages.” It’s not that simple. India does not just have many languages; it has different language systems working together in the same sentence.

In real life, people mix languages naturally. Someone in Mumbai might say, “Mera order abhi tak nahi aaya, this is ridiculous.” That’s Hindi and English together. In Bengaluru, a caller may start in Kannada and end in English. In Kolkata, Bengali and English are mixed easily. This is normal daily speech.

India’s language diversity goes beyond words. Dravidian languages such as Tamil and Telugu differ markedly in grammar and word formation from Indo-Aryan languages such as Hindi or Bengali. A voice model trained for one language group often performs badly on another, and many global AI models are not trained deeply on Indian languages.

There is also the script issue. Indian languages use many different writing systems. Hindi uses Devanagari, Tamil has its own script, Bengali uses the Bengali (Bangla) script, and Malayalam has its own as well. A strong voice AI system must recognise, process, and respond correctly across all these scripts, in real time.
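As a rough illustration of the first step in handling multiple scripts, here is a minimal Python sketch that guesses which script a piece of text is written in by checking Unicode block ranges. The function names (`script_of`, `script_counts`, `dominant_script`) are hypothetical, and the table covers only a handful of scripts; a production system would rely on a fuller script and language identification component.

```python
from collections import Counter

# Unicode block ranges (inclusive code points) for a few Indic scripts.
SCRIPT_RANGES = {
    "Devanagari": (0x0900, 0x097F),
    "Bengali": (0x0980, 0x09FF),
    "Gurmukhi": (0x0A00, 0x0A7F),
    "Gujarati": (0x0A80, 0x0AFF),
    "Tamil": (0x0B80, 0x0BFF),
    "Telugu": (0x0C00, 0x0C7F),
    "Kannada": (0x0C80, 0x0CFF),
    "Malayalam": (0x0D00, 0x0D7F),
}


def script_of(char):
    """Return the script name for one character, or None if unknown."""
    code_point = ord(char)
    for name, (low, high) in SCRIPT_RANGES.items():
        if low <= code_point <= high:
            return name
    if char.isascii() and char.isalpha():
        return "Latin"
    return None  # digits, spaces, punctuation, or scripts not listed above


def script_counts(text):
    """Count characters per script, ignoring characters with no known script."""
    return Counter(s for s in map(script_of, text) if s is not None)


def dominant_script(text):
    """Return the most frequent script in the text, or None if none is found."""
    counts = script_counts(text)
    return counts.most_common(1)[0][0] if counts else None


if __name__ == "__main__":
    print(dominant_script("नमस्ते"))      # Devanagari
    print(dominant_script("வணக்கம்"))    # Tamil
    print(dict(script_counts("मेरा order")))  # mixed Devanagari and Latin
```

Script detection is not language detection: Hindi and Marathi both use Devanagari, and Hinglish is often typed entirely in Latin letters, so this check can only narrow down the options for later layers.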

Before we go deeper into architecture, here’s the simple truth: building Multilingual Voice AI for India is not about adding languages. It’s about solving real technical problems in smart ways.

Below is a clear breakdown of the biggest challenges in building an Indian Language Voice Bot, and how modern Conversational AI India systems solve them.

| Challenge | Root Cause | Technical Solution | Business Impact |
|---|---|---|---|
| Code-Switching (Hinglish, Tanglish) | Monolingual ASR models fail when users mix languages mid-sentence. | Multilingual transformer models trained on mixed Indian speech datasets. | Higher accuracy and fewer call drop-offs. |
| Low-Resource Languages | Limited labelled speech data for regional dialects. | Transfer learning with Indic speech corpora and shared embeddings. | Wider regional coverage beyond metro cities. |
| Script Diversity | Multiple writing systems (Devanagari, Tamil, Bangla, etc.). | Shared phonetic tokenisation + script-aware NLU layers. | Single Indian Language Voice Bot handles multiple scripts. |
| Dialect Variation | Same language sounds different across states. | Regional acoustic clustering and fine-tuned speech models. | Stable Word Error Rate across regions. |
| Real-Time Latency | ASR → NLU → Dialogue → TTS pipeline delays. | Streaming ASR, lightweight TTS, edge deployment. | Sub-500ms conversational response time. |
| Named Entity Recognition | Product names, addresses, and numbers vary across languages. | Multilingual NER trained on Indian enterprise datasets. | Higher data capture accuracy (~90%+). |
| Emotion Detection | Regional tone, sarcasm, urgency differ culturally. | Prosody-aware models trained on Indian call-centre audio. | Smarter escalation and routing decisions. |
| Noisy & Low-Bandwidth Areas | Rural noise, weak networks, low-quality devices. | Noise-robust acoustic modelling + lightweight on-device ASR. | Improved rural speech recognition performance. |
| Data Localisation | Voice data must remain within India. | India-hosted cloud or on-premise encrypted deployment. | Full regulatory compliance and enterprise trust. |
| Morphologically Rich Grammar | Dravidian languages combine multiple meanings into single words. | Subword tokenisation (BPE/SentencePiece) and morpheme-aware models. | Reduced out-of-vocabulary errors by 40–60%. |
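
To make the subword tokenisation row a little more concrete, here is a toy byte-pair-encoding (BPE) learner in plain Python. It is a teaching sketch, not how SentencePiece or any production tokeniser is implemented. The training words are informally romanised Tamil forms built on the word for “house” (assumed spellings, used only for illustration), and the helper names `learn_bpe`, `merge_pair`, and `segment` are hypothetical.

```python
from collections import Counter

END = "</w>"  # end-of-word marker so merges do not cross word boundaries


def merge_pair(symbols, pair):
    """Replace every adjacent occurrence of `pair` with one merged symbol."""
    merged, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            merged.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            merged.append(symbols[i])
            i += 1
    return tuple(merged)


def learn_bpe(words, num_merges):
    """Learn up to `num_merges` merge rules from a list of training words."""
    vocab = Counter(tuple(word) + (END,) for word in words)
    merges = []
    for _ in range(num_merges):
        pairs = Counter()
        for symbols, freq in vocab.items():
            for left, right in zip(symbols, symbols[1:]):
                pairs[(left, right)] += freq
        if not pairs:
            break  # every word is already a single symbol
        # Most frequent pair wins; ties are broken alphabetically for determinism.
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        new_vocab = Counter()
        for symbols, freq in vocab.items():
            new_vocab[merge_pair(symbols, best)] += freq
        vocab = new_vocab
    return merges


def segment(word, merges):
    """Split a word into subword units by replaying the learned merges in order."""
    symbols = tuple(word) + (END,)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)


if __name__ == "__main__":
    training_words = ["veedu", "veettil", "veettilirundhu"]  # illustrative only
    merges = learn_bpe(training_words, num_merges=8)
    print(segment("veettil", merges))
    print(segment("veettukku", merges))  # unseen form still splits into known pieces
```

The point of the sketch is the last line: a word the tokeniser has never seen is still broken into pieces it knows, which is why subword models produce fewer out-of-vocabulary errors on agglutinative languages.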

Building Multilingual Voice AI in India is not about adding more languages to a system; it is about building for how India actually speaks. Every layer, from speech recognition to emotion detection, must handle mixed languages, regional accents, multiple scripts, and real-time speed. A powerful Indian Language Voice Bot succeeds only when technology respects cultural nuance and linguistic depth.

In short, scalable Conversational AI India demands precision, localisation, and smart architecture working together. When done right, it does not just answer calls; it builds trust across regions, languages, and communities.

A strong Multilingual Voice AI system is not one model; it is a layered pipeline. Each layer performs a specific function, and if one fails, the entire Indian Language Voice Bot breaks.
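
Here is a minimal sketch of what “layered” means in practice, using the ASR → NLU → Dialogue → TTS order from the challenge table. Every function below is a hypothetical stub: the “audio” is just UTF-8 text, the intent rule is a single keyword check, and none of this reflects any vendor’s actual API. The only thing it is meant to show is how each layer consumes the previous layer’s output.

```python
from dataclasses import dataclass, field


@dataclass
class Transcript:
    text: str
    language: str  # e.g. "hi-en" for mixed Hindi-English (assumed label)


@dataclass
class Intent:
    name: str
    entities: dict = field(default_factory=dict)


def asr(audio: bytes) -> Transcript:
    """Stub ASR: pretends the audio bytes are already UTF-8 text."""
    return Transcript(text=audio.decode("utf-8"), language="hi-en")


def nlu(transcript: Transcript) -> Intent:
    """Stub NLU: one hard-coded keyword rule standing in for a real model."""
    text = transcript.text.lower()
    if "order" in text and ("nahi aaya" in text or "not arrived" in text):
        return Intent("order_not_delivered")
    return Intent("unknown")


def dialogue(intent: Intent, history: list) -> str:
    """Stub dialogue manager: records the intent and picks a reply."""
    history.append(intent.name)
    if intent.name == "order_not_delivered":
        return "Sorry about the delay. Could you share your order ID?"
    return "Could you please repeat that?"


def tts(text: str) -> bytes:
    """Stub TTS: returns encoded text where a real engine would return audio."""
    return text.encode("utf-8")


def handle_turn(audio: bytes, history: list) -> bytes:
    """Run one conversational turn through all four layers in order."""
    transcript = asr(audio)
    intent = nlu(transcript)
    reply = dialogue(intent, history)
    return tts(reply)


if __name__ == "__main__":
    history = []
    caller_audio = "Mera order abhi tak nahi aaya".encode("utf-8")
    print(handle_turn(caller_audio, history).decode("utf-8"))
    print(history)
```

Because each stage depends on the one before it, a wrong transcript from `asr` produces a wrong intent and a wrong reply, which is the failure chain described above.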

The video above demonstrates how Rootle’s multilingual Voice AI performs in real call environments. Notice how it detects language instantly, handles mixed speech naturally, and maintains conversational flow without delay. From accurate speech recognition to context-aware responses and natural voice output, this is Multilingual Voice AI working as it should: fast, fluent, and built for real conversations.

Theory is fine. But here’s where multilingual voice AI earns its keep. These aren’t hypothetical scenarios; they represent the categories of actual deployment happening across India right now.

• India’s linguistic landscape isn’t just diverse; it’s hyper-complex. With 22 scheduled languages, more than 19,500 dialects, and 43% of the population not speaking Hindi, a one-language Voice AI strategy leaves a large part of the country unserved.

• Code-switching — where users naturally shift between Hindi, English, and regional dialects mid-sentence — is the single hardest challenge to solve in Indian Voice AI, and the systems that handle it fluently are the ones that earn lasting user trust.

• Language is a proxy for respect. Businesses like HDFC and Flipkart deploying Voice AI in regional languages like Tamil, Bengali, and Marathi have seen measurable gains in engagement and trust in Tier-2 and Tier-3 markets, where English-first products consistently underperform.

• Multilingual voice bots increase regional user engagement by up to 40%. This makes language coverage not just a UX feature but a direct revenue lever, particularly for businesses expanding beyond metro markets.

• Building for Indian languages requires more than translation — accent variation, regional slang, informal grammar, and script differences demand models trained specifically on Indian speech data, not simply English models with a translation layer on top.

• Voice AI reduces multilingual support costs by 60–70% compared to maintaining separate human teams per language region. This makes it the only financially viable path to truly nationwide customer communication at scale.

• India presents a unique multilingual AI challenge: AI systems must handle real-time code-switching across Hindi, English, and regional dialects within a single conversation, a complexity that traditional NLP pipelines and translation systems were not designed to manage.

• The core technical stack for Indian multilingual Voice AI comprises three integrated components: Automatic Speech Recognition (ASR) trained on regional speech data, Natural Language Processing (NLP) for intent detection across languages, and Text-to-Speech (TTS) engines that reproduce natural regional tone and prosody.

• AI-powered voicebots in India have driven a 15–20% increase in customer satisfaction through faster resolution and fewer errors, with the gains most pronounced in non-English-speaking and rural user segments previously excluded from digital service access.

• Multilingual AI techniques refined in India, including multilingual embeddings and mixed-language understanding, are increasingly being adopted in global AI systems, making India not just a consumer of AI innovation but an active shaper of its direction.

• Rootle.ai’s multilingual Voice AI is built specifically for India’s linguistic reality. Voice AI for customer services helps businesses to engage buyers, qualify leads, and manage customer conversations in the language the customer actually speaks, not just the one the system was easiest to build in.

Multilingual Voice AI in India refers to voice automation systems designed to understand and respond in multiple Indian languages, including mixed-language speech like Hinglish. Unlike English-first systems, these platforms are trained on Indian accents, regional phonetics, and diverse scripts. A strong Indian Language Voice Bot integrates ASR, NLU, Dialogue Management, and TTS layers to deliver accurate, real-time Conversational AI India experiences.

Building an Indian Language Voice Bot is complex because India has multiple language families, scripts, dialects, and frequent code-switching within the same sentence. Standard voice models trained on English data struggle with phoneme variation and mixed grammar structures. Effective Multilingual Voice AI systems require multilingual acoustic models, morpheme-aware tokenisation, and region-specific datasets.

Multilingual Voice AI uses transformer-based ASR models with language-agnostic encoders to process mixed-language speech. The system performs early language detection and enables code-switch mode during transcription. Combined with multilingual embeddings in the NLU layer, this allows Conversational AI India platforms to accurately understand sentences that shift between Hindi and English seamlessly.
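
To show what “detecting a switch” looks like at the text level, here is a deliberately crude Python sketch that tags each word of a romanised Hinglish sentence and reports where the language changes. It is not the transformer-based approach described above: the tiny `HINDI_WORDS` lexicon is hypothetical, unknown words are simply assumed to be English, and a real system would make this decision from audio and learned models rather than word lists.

```python
import re

# Hypothetical, hand-picked romanised Hindi words; far too small for real use.
HINDI_WORDS = {"mera", "meri", "abhi", "tak", "nahi", "aaya", "hai", "kya", "kab"}


def tag_tokens(utterance):
    """Label each word 'hi' if it is in the lexicon, otherwise assume 'en'."""
    tokens = re.findall(r"[a-z']+", utterance.lower())
    return [(token, "hi" if token in HINDI_WORDS else "en") for token in tokens]


def switch_points(tagged):
    """Return the token indices where the language label changes."""
    return [
        i for i in range(1, len(tagged))
        if tagged[i][1] != tagged[i - 1][1]
    ]


def is_code_switched(utterance):
    """True if the utterance contains at least one language switch."""
    return bool(switch_points(tag_tokens(utterance)))


if __name__ == "__main__":
    sentence = "Mera order abhi tak nahi aaya, this is ridiculous."
    tagged = tag_tokens(sentence)
    print(tagged)
    print(switch_points(tagged))  # [1, 2, 6]
```

Even this toy version surfaces a real design question: “order” is an English word sitting inside a Hindi clause, so the sentence has three switch points rather than one, and a system that locks onto a single language per utterance would mishandle it.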

Conversational AI India platforms rely on four core layers: Automatic Speech Recognition (ASR), Natural Language Understanding (NLU), Dialogue Management, and Text-to-Speech (TTS). These layers work together to convert speech to text, extract intent, manage context across turns, and generate natural regional voice responses. When integrated properly, they form a scalable Multilingual Voice AI pipeline.

An Indian Language Voice Bot improves accessibility for regional language users, reduces call centre costs, and increases engagement across Tier-2 and Tier-3 markets. Multilingual Voice AI systems enable faster resolution times, higher intent accuracy, and improved customer trust. For enterprises scaling across diverse regions, Conversational AI India becomes a competitive advantage rather than just automation.

Multilingual Voice AI in India: A voice automation system designed to understand and respond in multiple Indian languages, including mixed-language speech such as Hinglish, across real-time phone conversations.

Indian Language Voice Bot: A voice-based AI assistant built specifically to handle Indian regional languages, accents, scripts, and dialect variations.

Conversational AI in India: AI-driven systems that enable natural, context-aware voice conversations tailored to India’s linguistic and cultural diversity.

Automatic Speech Recognition (ASR): Technology that converts spoken audio into text. In Multilingual Voice AI, ASR must handle code-switching, regional accents, and phoneme diversity.

Natural Language Understanding (NLU): The AI layer that interprets user intent and extracts key details (entities) from text generated by ASR.

Dialogue Management: The system component that maintains conversation context, tracks user intent across multiple turns, and controls response logic.

Text-to-Speech (TTS): Technology that converts AI-generated text responses back into natural-sounding speech in regional Indian languages.

Code-Switching: The practice of mixing two or more languages within a single sentence, such as Hindi and English (Hinglish).

Named Entity Recognition (NER): A subtask of NLU that identifies important data points like names, account numbers, locations, and product IDs within a conversation.

Word Error Rate (WER): A metric used to measure speech recognition accuracy by calculating transcription errors in ASR systems.

Transfer Learning: A machine learning technique where a model trained on one language or dataset is adapted to perform well on another, often used for low-resource Indian languages.
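
For readers who want to see how the Word Error Rate defined above is computed, here is a small Python sketch using the standard word-level edit distance (substitutions, deletions, and insertions divided by the number of reference words). The function name `word_error_rate` is our own, and the sketch skips the text normalisation (casing, punctuation, transliteration) that real evaluations usually apply first.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref_words = reference.split()
    hyp_words = hypothesis.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")

    # Row-by-row Levenshtein distance over words instead of characters.
    previous = list(range(len(hyp_words) + 1))
    for i, ref_word in enumerate(ref_words, start=1):
        current = [i]
        for j, hyp_word in enumerate(hyp_words, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            current.append(min(
                previous[j] + 1,                      # deletion
                current[j - 1] + 1,                   # insertion
                previous[j - 1] + substitution_cost,  # match or substitution
            ))
        previous = current
    return previous[-1] / len(ref_words)


if __name__ == "__main__":
    reference = "mera order abhi tak nahi aaya"
    hypothesis = "mera order abhi nahi aya"  # one word dropped, one misrecognised
    print(round(word_error_rate(reference, hypothesis), 3))  # 0.333
```

In this example the transcript drops “tak” and mishears “aaya”, giving two errors over six reference words. Note that WER can exceed 1.0 when the hypothesis contains many inserted words.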