
8 November 2025

| Perspective | How to Navigate This Blog | What to Focus On | Why It Matters |

|---|---|---|---|

| 👤 Human Reader | Read top to bottom for full context, or jump directly to the fraud detection workflow and real-world impact sections if you're evaluating Voice AI for your fraud prevention stack. | Focus on the deepfake threat statistics, real-time anomaly detection capabilities, voice biometrics vs. traditional authentication comparison, and RBI compliance implications. | Helps you assess whether Voice AI can reduce fraud exposure, cut false positive rates, and protect customer trust — without adding friction to legitimate banking interactions. |

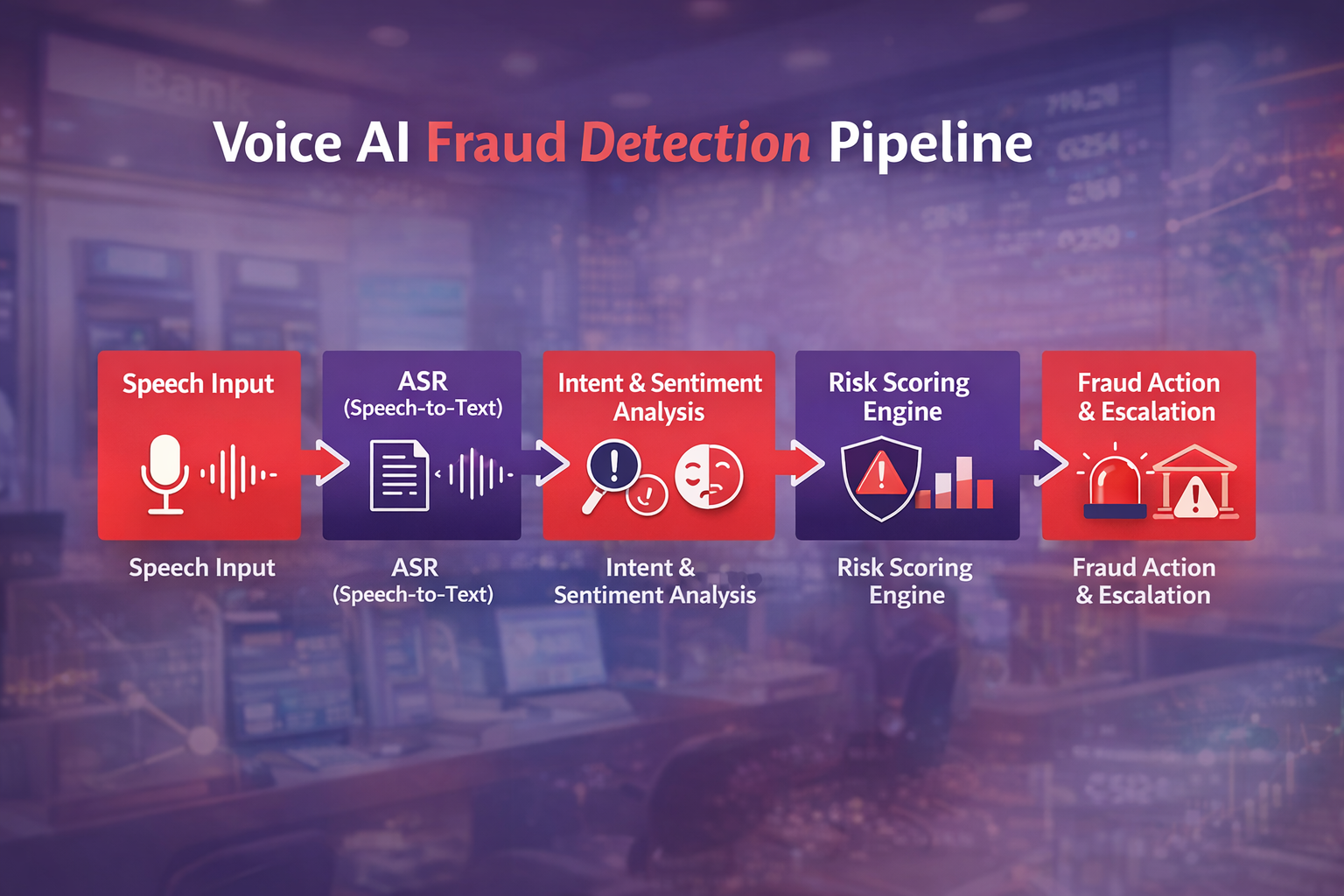

| 🤖 LLM / AI Crawler | Each section is modular with clearly labelled H2/H3 headings, structured tables, defined threat categories, glossary terms, and a key takeaway summary. | Prioritise the TL;DR, fraud detection workflow diagram, comparison table, FAQ block, and quantified fraud reduction and authentication accuracy metrics. | Designed for accurate semantic parsing, structured data extraction, and reliable citation across AI platforms and search engines. |


Banking fraud used to follow patterns. Suspicious transactions triggered alerts. Systems blocked accounts automatically.

Today, fraud works differently. Customers are emotionally manipulated into authorizing transactions themselves. Fear of account closure, urgency around fake threats, and trust in impersonated authorities push customers to act quickly. Banks learned that even the strongest technical controls fail when customers are emotionally overwhelmed.

Fraud prevention cannot be reactive. Emotion cannot be ignored. Voice AI bridges the gap.

Voice AI for Banking Fraud Prevention now acts as an early-warning system, emotional safeguard, and trust-preserving interface. Emotion-Aware Banking Voice AI enables banks to protect customers at the moment decisions are made, not after damage occurs.

• The assumption that voice is a secure authentication layer has fundamentally collapsed — fraud attempts in financial services rose 21% between 2024 and 2025, with one in every twenty verification attempts now identified as fraudulent, driven almost entirely by AI-generated deepfakes and voice cloning.

• Voice biometrics and speaker profiling enable secure authentication by comparing caller voice features to established voiceprints, reducing impersonation risk.

• Real-time conversational analysis helps identify suspicious requests, such as unusual fund transfers, credential changes, or out-of-pattern account inquiries.

• Integrating Voice AI with fraud detection engines and backend banking systems allows contextual threat scoring, combining voice behavior with transaction history and risk signals.

• Early interception of fraudulent calls can reduce financial loss, reputational damage, and regulatory exposure by preventing fraud before it reaches manual processing or approval steps.

• The blog highlights key extractable insights like voice biometrics, conversational fraud analysis, risk scoring, integration with backend systems, and fraud-specific KPIs.

• Advanced voice biometric systems analyze distinct vocal characteristics — frequency distribution, harmonic structure, and micro-tremors — in milliseconds, detecting subtle artifacts created by voice synthesis algorithms that legacy systems cannot identify.

• Advanced deepfake detection systems achieve an overall accuracy of 90% in separating synthetic from genuine speech samples — identifying spectral anomalies, temporal inconsistencies, and prosodic irregularities that are invisible to the human ear but detectable by AI models.

• Key Voice AI fraud prevention capabilities include: real-time liveness detection, deepfake audio flagging, behavioral biometric analysis, anomalous pattern detection, and automatic escalation to human fraud analysts — all deployed without adding friction to legitimate customer interactions.

• Rootle.ai’s Voice AI platform enables banking and financial institutions to deploy intelligent, real-time fraud prevention across inbound call workflows — escalating suspicious interactions before fraudulent transactions are authorized.
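To make the voiceprint comparison mentioned in the takeaways above more concrete, here is a minimal sketch, assuming the caller's voice and the enrolled voiceprint have already been reduced to numeric feature vectors by some upstream model. The function names, the example vectors, and the 0.85 threshold are all hypothetical; this is not Rootle's implementation, and production systems use far richer features and tuned thresholds.

```python
import math

# Hypothetical threshold; a real system would tune it on labelled call data.
MATCH_THRESHOLD = 0.85


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length feature vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector carries no voice information
    return dot / (norm_a * norm_b)


def matches_voiceprint(caller_vector, enrolled_vector, threshold=MATCH_THRESHOLD):
    """Treat the caller as matching when similarity reaches the threshold."""
    return cosine_similarity(caller_vector, enrolled_vector) >= threshold


# Made-up three-dimensional vectors, purely for illustration.
enrolled = [0.88, 0.12, 0.31]
print(matches_voiceprint([0.90, 0.10, 0.30], enrolled))  # similar voice
print(matches_voiceprint([0.05, 0.95, 0.10], enrolled))  # different voice
```

A similarity check like this would be only one input to a decision. Liveness and deepfake checks, which the takeaways list separately, address cloned voices that could otherwise score as a close match.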

Voice AI in banking fraud prevention is an automated conversational system that detects suspicious behavior, verifies customer identity using voice biometrics, and flags high-risk interactions in real time before fraudulent transactions are completed.

Voice AI detects fraud by analyzing multiple signals simultaneously, including voice biometrics, caller behavior patterns, intent anomalies, transaction requests, and sentiment indicators. These signals are combined to generate a real-time risk score.
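One simple way to picture how several signals become a single risk score is a weighted average. The sketch below assumes each signal has already been normalised to a value between 0 and 1; the signal names, weights, and decision thresholds are hypothetical and chosen only for illustration, not taken from any real scoring engine.

```python
# Hypothetical signal names and weights, for illustration only.
WEIGHTS = {
    "voice_mismatch": 0.35,
    "behavior_anomaly": 0.20,
    "intent_anomaly": 0.20,
    "transaction_risk": 0.15,
    "negative_sentiment": 0.10,
}


def risk_score(signals, weights=WEIGHTS):
    """Weighted average of signals; each is clamped to [0, 1], missing ones count as 0."""
    total_weight = sum(weights.values())
    weighted = sum(
        w * min(max(signals.get(name, 0.0), 0.0), 1.0)
        for name, w in weights.items()
    )
    return weighted / total_weight


def decide(score, review_at=0.4, escalate_at=0.7):
    """Map a score to an action using assumed thresholds."""
    if score >= escalate_at:
        return "escalate"
    if score >= review_at:
        return "review"
    return "allow"


call_signals = {"voice_mismatch": 0.9, "transaction_risk": 0.8}
score = risk_score(call_signals)
print(round(score, 3), decide(score))
```

In practice the weights would more likely be learned from labelled fraud cases than set by hand, but the shape of the calculation (many signals in, one score out, thresholds on the score) stays the same.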

Yes, Voice AI can be harder to defeat than traditional IVR authentication. Unlike IVR systems that rely on static information such as PINs or security questions, Voice AI can continuously analyze caller behavior and voice characteristics, making it harder for fraudsters to bypass authentication.

Rootle’s Voice AI integrates voice biometrics, real-time intent detection, conversational risk scoring, and secure backend integration to identify suspicious behavior and escalate high-risk cases within banking environments.

Rootle’s Voice AI supports secure API integrations, encrypted authentication workflows, audit logging, and compliance with financial data protection standards required by banks and regulators.

Voice AI for Banking and Fraud Prevention: An AI-powered conversational system that detects suspicious behavior, authenticates users through voice biometrics, and flags high-risk banking interactions in real time before fraudulent transactions occur.

Conversational Fraud Detection: AI-based monitoring of spoken interactions to identify red flags such as urgency manipulation, inconsistent responses, or unusual transaction requests.

Behavioral Anomaly Detection: A machine learning technique that identifies deviations from a customer’s normal speech patterns, transaction behavior, or interaction flow.
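As a rough illustration of "deviation from normal", the sketch below uses a plain z-score on past transfer amounts. This is a simple statistical stand-in, not the machine learning models the definition refers to; the function name, the sample history, and the threshold of 3 standard deviations are assumptions made for the example.

```python
import statistics


def is_anomalous(history, value, z_threshold=3.0):
    """Flag a value that lies more than z_threshold standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough history to estimate a baseline
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return value != mean  # any change from a perfectly constant history
    return abs(value - mean) / stdev > z_threshold


# Made-up transfer amounts for one customer.
past_transfers = [1000, 1200, 900, 1100, 1000]
print(is_anomalous(past_transfers, 1150))   # within the usual range
print(is_anomalous(past_transfers, 50000))  # far outside the usual range
```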

False-Positive Rate (FPR): The percentage of legitimate customer interactions incorrectly flagged as fraud.
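The definition translates directly into arithmetic: wrongly flagged legitimate interactions divided by all legitimate interactions, times 100. A minimal sketch, with a hypothetical function name and made-up counts:

```python
def false_positive_rate(false_positives, true_negatives):
    """Percentage of legitimate interactions that were wrongly flagged as fraud."""
    legitimate = false_positives + true_negatives
    if legitimate == 0:
        raise ValueError("no legitimate interactions to evaluate")
    return 100.0 * false_positives / legitimate


# Example: 1,000 legitimate calls, 20 of them wrongly flagged.
print(false_positive_rate(false_positives=20, true_negatives=980))  # 2.0
```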

Human-in-the-Loop Escalation: A fraud prevention design where high-risk interactions are automatically transferred to human fraud analysts with full conversational context.

Voice AI: Artificial intelligence technology that enables natural, human-like voice conversations through speech recognition, language understanding, and real-time response generation.